What ‘Skill’ Really Means: From Tasks to Competence

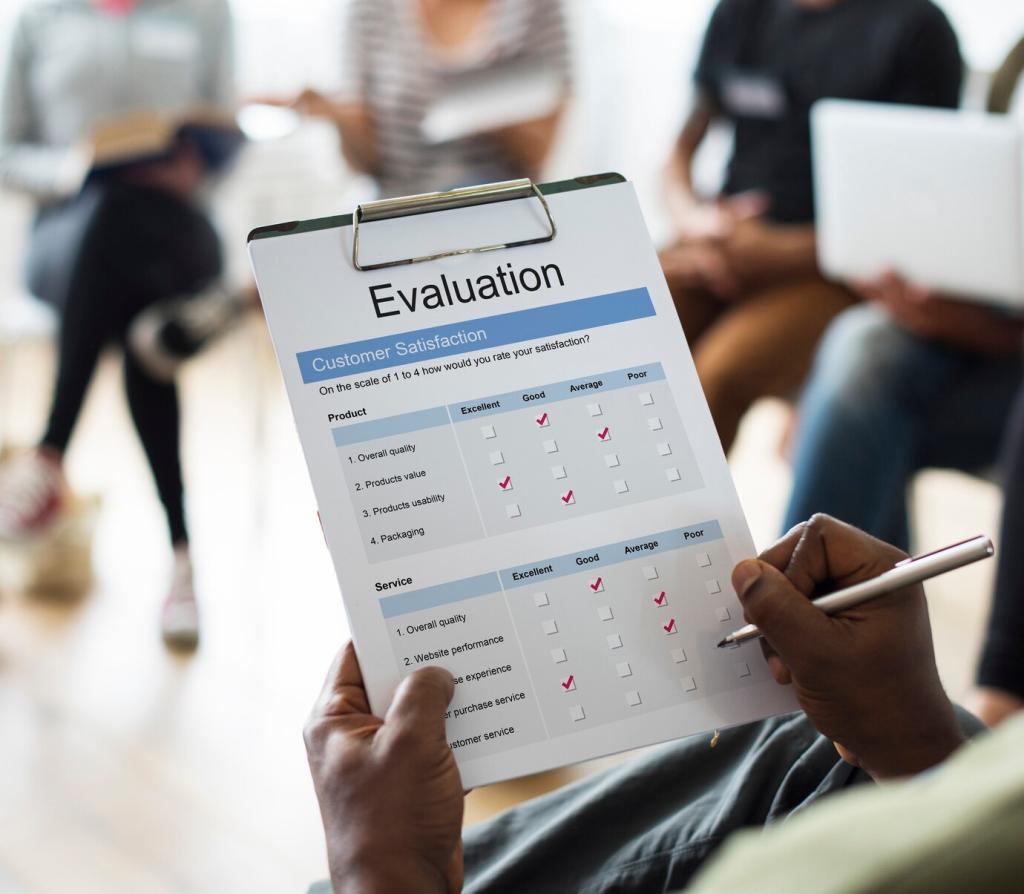

Interview high performers, observe workflows, and collect artifacts such as code reviews, client emails, or design drafts. Then convert tasks into skills by asking what great performance looks like and how others recognize it. Finally, build an assessment blueprint that mirrors the work people actually do.


Replace vague labels like “communication” with behaviorally anchored descriptions tied to a specific context, such as writing a status email to a client. Describe what a skilled professional does, says, and produces at each proficiency level. Clear behavioral anchors reduce disagreement between raters, make scoring more consistent, and give feedback something concrete to point to.
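One way to make behaviorally anchored descriptions concrete enough to score consistently is to write them down as data. The Python sketch below is illustrative only. The rubric, its four levels, the behavior wording, the rule that levels are cumulative, and the helper names `score` and `exact_agreement` are all hypothetical assumptions, not a standard instrument.

```python
# Hypothetical behaviorally anchored rubric for "communication" in one context:
# written emails to a client. Levels are assumed to be cumulative, so reaching
# level N requires every behavior listed at levels 1..N.
CLIENT_EMAIL_RUBRIC: dict[int, tuple[str, ...]] = {
    1: ("acknowledges the client's request",),
    2: ("answers the question asked", "names a next step"),
    3: ("assigns an owner and date to the next step", "flags a relevant risk"),
    4: ("anticipates follow-up questions", "adapts technical detail to the reader's role"),
}


def score(observed: set[str], rubric: dict[int, tuple[str, ...]]) -> int:
    """Return the highest level whose behaviors, and those of all lower levels, were observed.

    Returns 0 when not every level-1 behavior was observed.
    """
    achieved = 0
    for level in sorted(rubric):
        # Stop at the first level with any behavior missing (cumulative rule).
        if not set(rubric[level]) <= observed:
            break
        achieved = level
    return achieved


def exact_agreement(rater_a: list[int], rater_b: list[int]) -> float:
    """Share of work samples on which two raters assigned the same level."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty lists of scores")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


if __name__ == "__main__":
    email_behaviors = {
        "acknowledges the client's request",
        "answers the question asked",
        "names a next step",
        "flags a relevant risk",  # level 3 only partly met: no owner or date
    }
    print(score(email_behaviors, CLIENT_EMAIL_RUBRIC))  # 2
    print(exact_agreement([2, 3, 3, 1], [2, 3, 4, 1]))  # 0.75
```

Because each level lists observable behaviors, two raters looking at the same email are checking the same items rather than interpreting “good communication” independently. Exact agreement is the simplest consistency check; chance-corrected statistics such as Cohen's kappa are a common next step.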